How to replace your engineering team with AI

The ultimate guide to context engineering. Build any production-ready app or website in minutes with an army of AI engineers. No coding skills required.

A week ago Sam Altman dropped a cryptic post on his X account.

This was mere days before GPT-5’s launch.

But the reality of what happened only really sank in after GPT-5 became publicly available.

Influencers started showing the crazy stuff that’s possible.

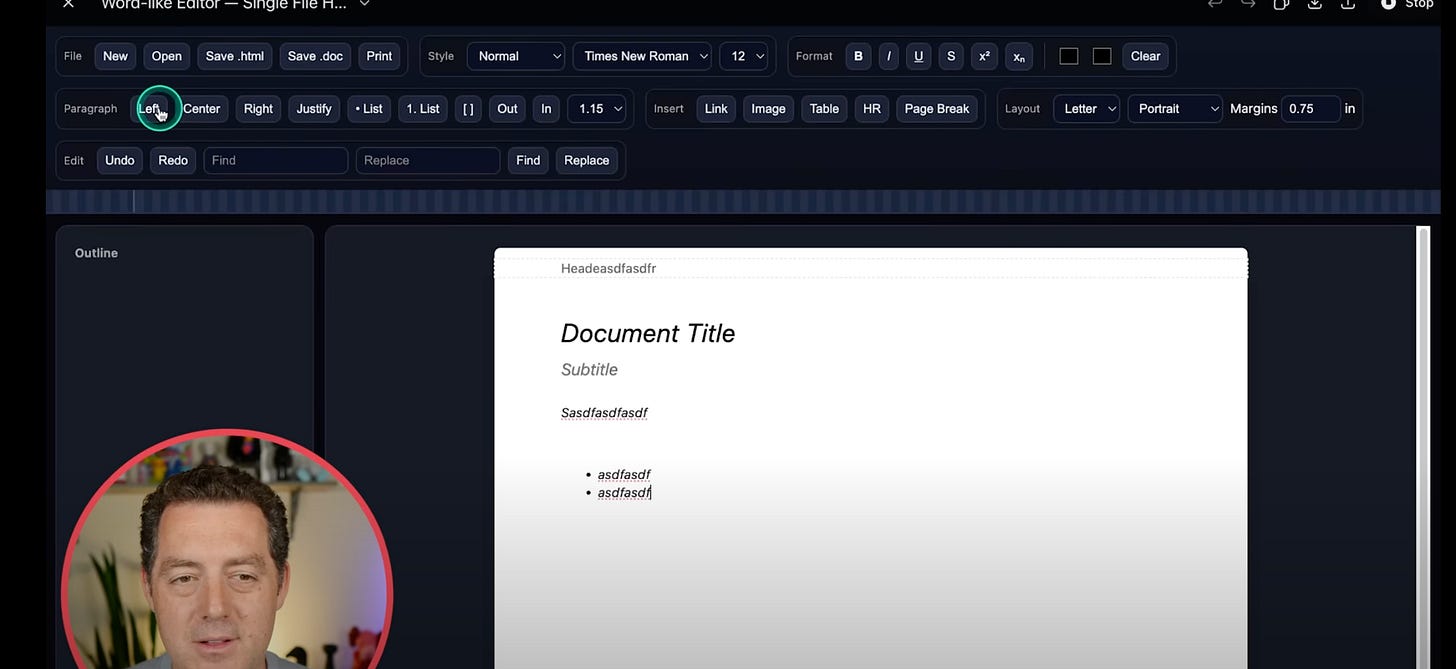

Matthew Berman built Microsoft Word and Excel clones from a single prompt.

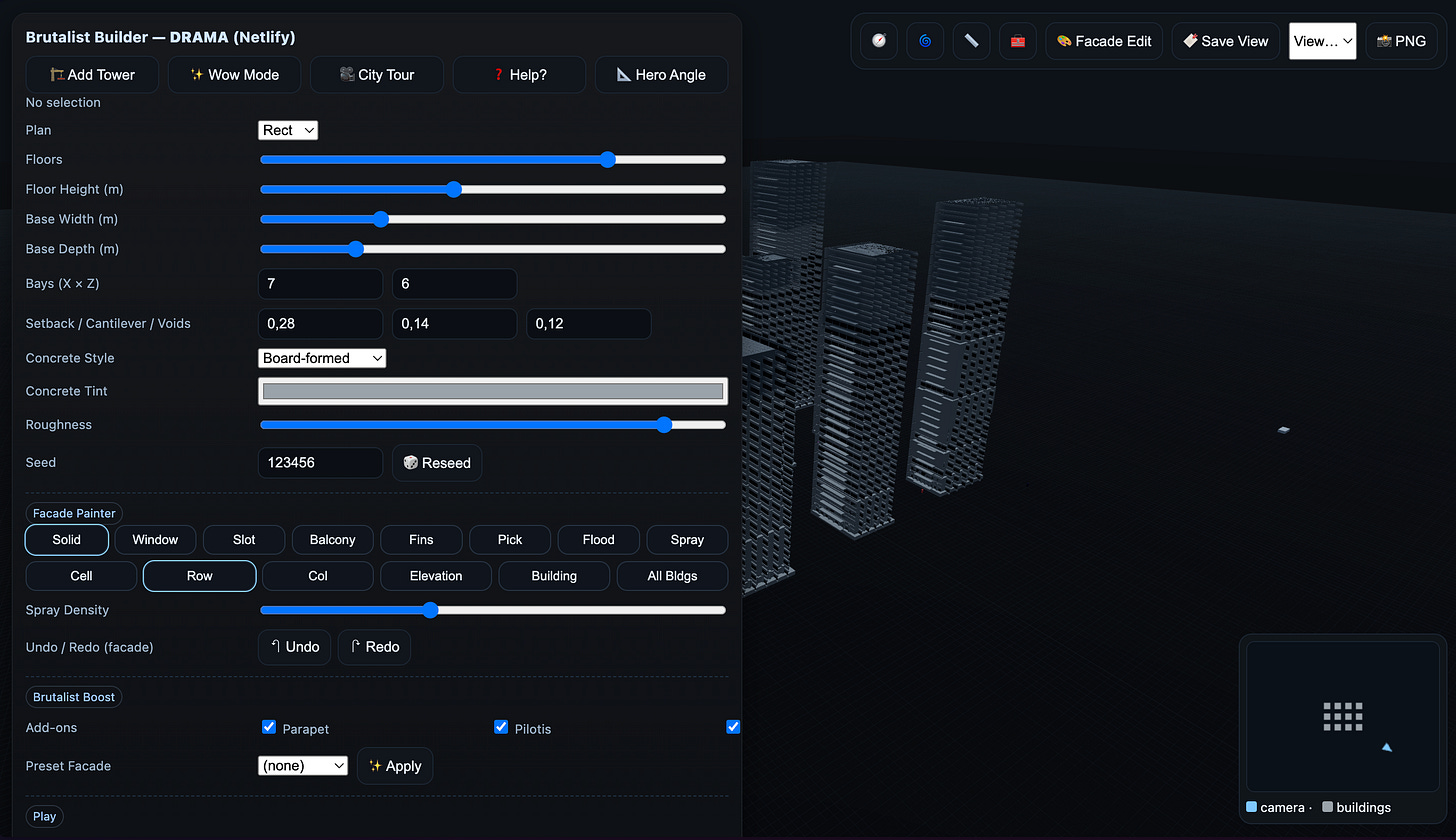

Wharton professor Ethan Mollick spun up a highly configurable “Brutalist Building Simulator” from a single line.

There are dozens of similar examples scattered all across the web.

I’ve started playing around with high-complexity projects as well.

Two things surprised me: (1) the quality of the UI/UX output and (2) the attention to nuance.

ChatGPT thinks through the entire project and adds details or even use cases you might have overlooked (e.g., city selector dropdown, pickers, etc.).

But the more I stretched the model, the more the limits became obvious.

GPT-5 started cutting corners (defaulting to super basic MVPs), changing tech stacks out of the blue, and producing errors.

In short, the model was missing valuable context that would keep the project consistent across multiple prompts.

Thankfully, there’s a solution that has generated a lot of hype — it’s called “context engineering.”

I’ve dived deep into that rabbit hole to get you up to speed on what this cryptic term means and how to set it up in minutes.

I promise that in 10–15 minutes you will have a fully-fledged engineering team of AI agents up and running.

Hope you’ll be as amazed as I was when I first saw this and realized the possible implications.

🧬 Article Structure

The LLM attention quirks. Importance of context. Never say “I can’t”. Attention noise.

Context Engineering. Context Engineering explained simply. The core principles behind it.

🔒 Building your agentic AI engineering team. Choosing an IDE, setting up context specifications. Spinning up engineering agents. Making iterations. + Includes a downloadable project you can use and start building immediately.

📏 The LLM attention quirks

The importance of context

According to a study, when the requirement is underspecified, LLMs tend to guess, up to ~41% of the time. With underspecified prompts, even tiny prompt changes are ~2× more likely to cause regressions and can substantially reduce accuracy (by up to ~20%).

You may have noticed this when trying to vibe-code. The more ambiguous the prompt is, the less likely the output will be sustainable. The tech stack might suddenly shift; new libraries get included; classes are rewritten. This can happen to the point where you have to rebuild your entire environment.

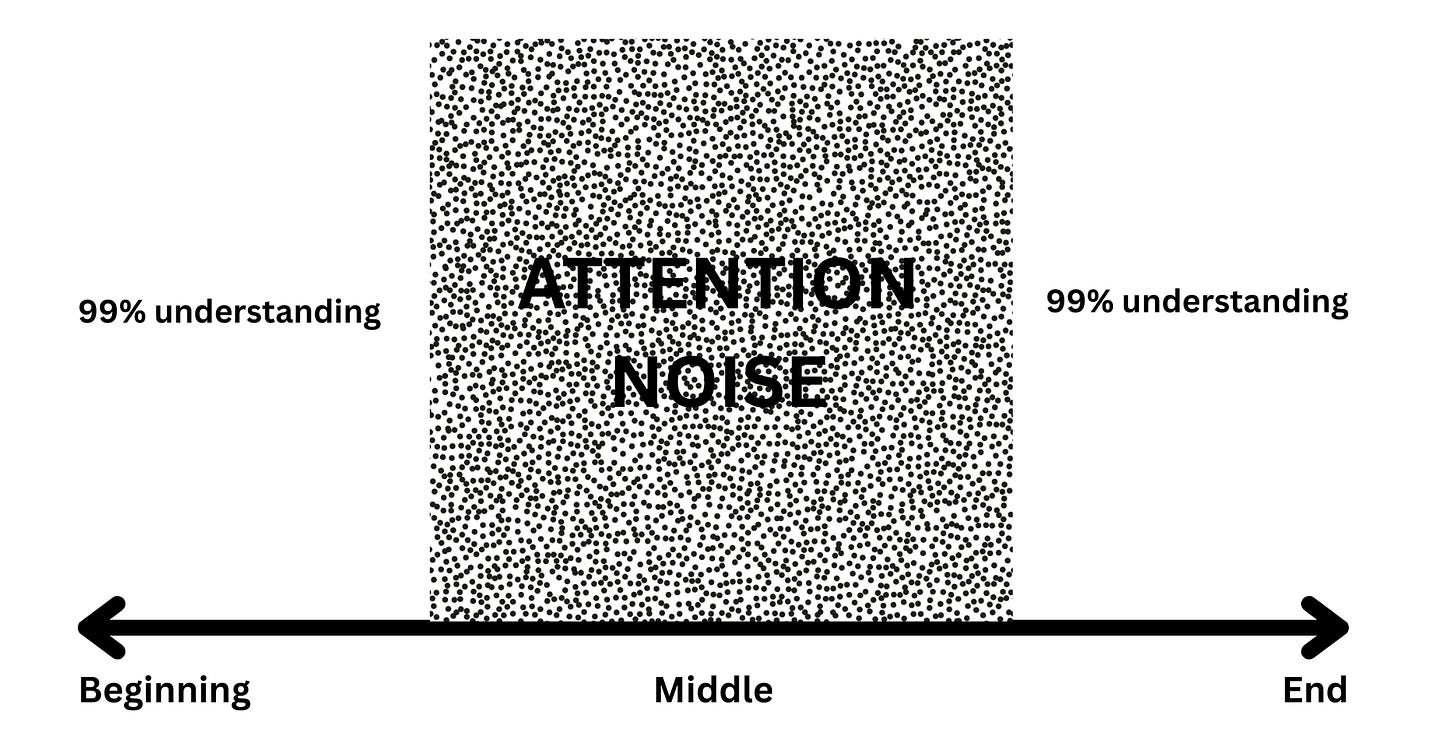

Where you place the context in the prompt is crucial to the outcome.

Despite long context windows, LLMs are still not great at catching details hidden in longer texts. They can predict the probability of the second word next to the first, but when it’s a 100k-word passage—that’s when things start getting shaky. A study has shown that long prompts with context hidden in the middle lead models to underuse those details.

In another related study, LLMs that were fine-tuned to ask clarifying questions at the beginning of the prompt beat generic base models in accuracy by as much as ~39%.

Just remember: must-use facts and pieces of context must always be placed at the beginning or at the end of your prompt.
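The placement rule can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the `build_prompt` helper, the section labels, and the example facts are all hypothetical names chosen for this sketch. It puts the must-use facts at the top of the prompt and repeats them at the bottom, so the long background text sits in the middle.

```python
def build_prompt(must_use_facts, background, task):
    """Assemble a prompt with must-use facts at the start and repeated at the end.

    Hypothetical helper: the section labels are illustrative, not a standard.
    """
    facts = "\n".join(f"- {fact}" for fact in must_use_facts)
    sections = [
        "MUST-USE FACTS:\n" + facts,              # front-loaded
        "BACKGROUND:\n" + background.strip(),     # long, lower-priority text in the middle
        "TASK:\n" + task.strip(),
        "REMINDER, MUST-USE FACTS:\n" + facts,    # repeated at the end
    ]
    return "\n\n".join(sections)


prompt = build_prompt(
    must_use_facts=["Stack: React + TypeScript", "Do not add new libraries"],
    background="Long project notes go here...",
    task="Add a city selector dropdown to the booking form.",
)
print(prompt)
```

Repeating the facts costs a few extra tokens, but it keeps them out of the middle of a long prompt, where the study above suggests they are most likely to be underused.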

Never say “I can’t”

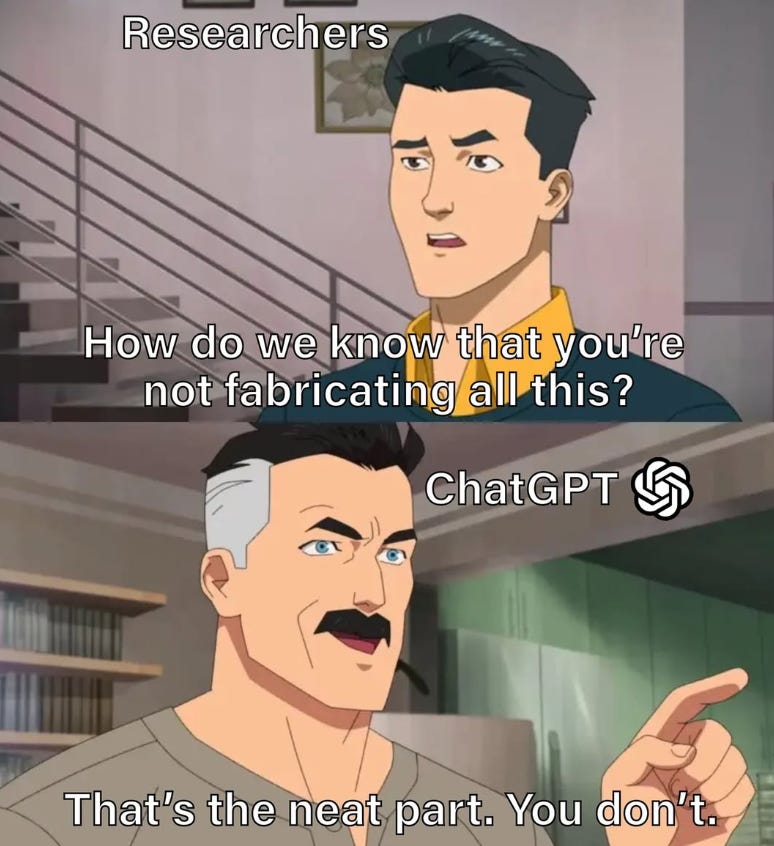

The existing behavior patterns ingrained in most LLMs bias them towards “guessing” instead of saying “I can’t answer” or “I don’t know how.”

A study of the top 20 LLMs has shown that abstention (the ability to acknowledge the limits of your knowledge) was extremely low across all of them.

In practical terms, this means that a model is 10× more likely to hallucinate the output than to say that it can’t.

The more context details you omit in the prompt, the more hallucinations you invite.
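One way to act on this is to check your own context for gaps before you send anything to the model. The sketch below assumes a hypothetical checklist of required fields (`REQUIRED_CONTEXT`) and a hypothetical `missing_context` helper. The field names are illustrative, so swap in whatever your project actually needs.

```python
# Hypothetical checklist of context every coding prompt should carry.
REQUIRED_CONTEXT = ("goal", "tech_stack", "file_structure", "constraints")


def missing_context(context):
    """Return the required fields that are absent or blank in `context`."""
    gaps = []
    for key in REQUIRED_CONTEXT:
        value = context.get(key)
        if value is None or not str(value).strip():
            gaps.append(key)
    return gaps


context = {
    "goal": "Booking app with a city selector",
    "tech_stack": "React + TypeScript",
    "constraints": "",  # left blank by mistake
}

gaps = missing_context(context)
if gaps:
    print("Fill in before prompting:", ", ".join(gaps))
```

Here the check flags `file_structure` and `constraints`, which are exactly the kind of details a model would otherwise have to guess.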

Attention noise

Earlier studies with GPT-3.5 and GPT-4o have shown that even punctuation changes can make outputs fluctuate by ~50%. This has improved with more advanced “chain-of-thought” models, but the “attention noise” phenomenon remains.

Even if you break your whole project into pieces and iterate on each piece with the LLM, there’s always a chance that slightly different sentence framing will distract the model (Apple has written extensively about this). You’ll end up with a patchy project that takes hours or days to debug and get working.

🛠️ Context Engineering

The concept

Now you understand why framing the context and placing it in the right part of your prompt is so critical for LLMs.

That also explains why most vibe-coding efforts are nothing more than pet projects that are more expensive to scale than to build.

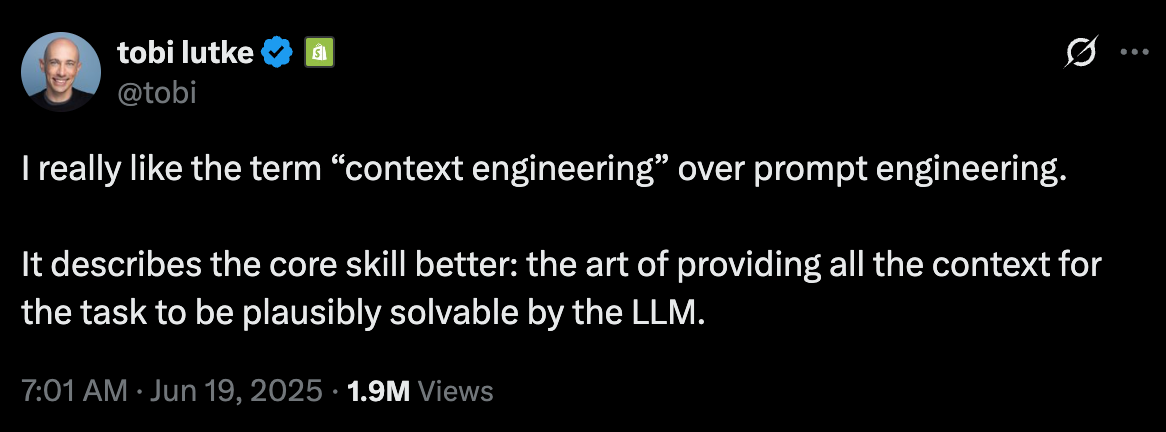

Now a new concept comes onstage. It’s called “Context Engineering.”

The term was initially coined by Tobi Lütke in one of his viral X posts.

It was further picked up by Andrej Karpathy, making it an “industry-standard” term.

If I were to give it a specific definition: context engineering is a framework where you provide the model with the necessary requirements, the tools to execute them, and guardrails, all within the context itself.

Core Principles

Before we dive deeper into the actual implementation, here are the core principles your environment needs to follow.

Front-load the non-negotiable context.

If you’re building a project, provide a clear description of what you want. But if you’re lazy (like I was when working on it), you can delegate this to your AI agents: have them write up the description, figure out the minimum tech stack, etc.

Decompose big tasks.

You can only eat an elephant one piece at a time, and the same logic applies to your AI agents. Employ an agent that will break down a large project into granular tasks for the rest of the AI team. Each task will serve as context for the prompt.

Give the model a role and scope.

When you set up a team of AI agents, provide a role (e.g., senior frontend engineer) and links to your specifications.

Add guardrails.

You don’t want LLMs to hallucinate design requirements, file names, or project structures. Constrain them to avoid attention noise and its side effects.
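The four principles above can be sketched as a small data structure. This is a rough illustration under stated assumptions, not how any particular IDE or agent framework does it: the `AgentSpec` class, its fields, the file paths, and the task list are all hypothetical. The role, specification links, and guardrails are front-loaded into every prompt, each granular task is appended last, and a simple check flags file names the agent was never allowed to touch.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class AgentSpec:
    """Hypothetical description of one AI agent on the team."""
    role: str                    # e.g. "senior frontend engineer"
    spec_links: List[str]        # paths to your specification files
    guardrails: List[str]        # rules the agent must not break
    allowed_files: Set[str] = field(default_factory=set)

    def system_prompt(self, task: str) -> str:
        """Front-load role, specs, and guardrails; put the granular task last."""
        lines = [f"Role: {self.role}", "Specifications:"]
        lines += [f"- {link}" for link in self.spec_links]
        lines.append("Guardrails:")
        lines += [f"- {rule}" for rule in self.guardrails]
        lines.append(f"Current task: {task}")
        return "\n".join(lines)

    def unexpected_files(self, proposed: List[str]) -> List[str]:
        """Guardrail check: files the agent proposed that are not on the allow-list."""
        return sorted(set(proposed) - self.allowed_files)


frontend = AgentSpec(
    role="senior frontend engineer",
    spec_links=["specs/product.md", "specs/tech-stack.md"],
    guardrails=["Do not change the tech stack", "Do not invent file names"],
    allowed_files={"src/App.tsx", "src/components/CitySelector.tsx"},
)

# A large project decomposed into granular tasks, one prompt per task.
tasks = ["Build the city selector dropdown", "Wire the selector into the booking form"]
prompts = [frontend.system_prompt(task) for task in tasks]

print(prompts[0])
print(frontend.unexpected_files(["src/App.tsx", "src/utils/magic.ts"]))
```

Because every prompt is generated from the same spec, the role, stack, and guardrails stay identical across tasks, which is the consistency that ad hoc prompting tends to lose.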