The Ultimate Guide to Claude Code Skills

The "hype-free" advanced guide to skills + the only skill library you'll need.

Last week, I stumbled upon a clickbait post.

“Install these 47 skills,” the title read.

“Your Claude Code is basically lobotomized without them” was the subtitle.

I installed all 47. Tested every single one against vanilla Claude Code output. Forty of them made the output worse.

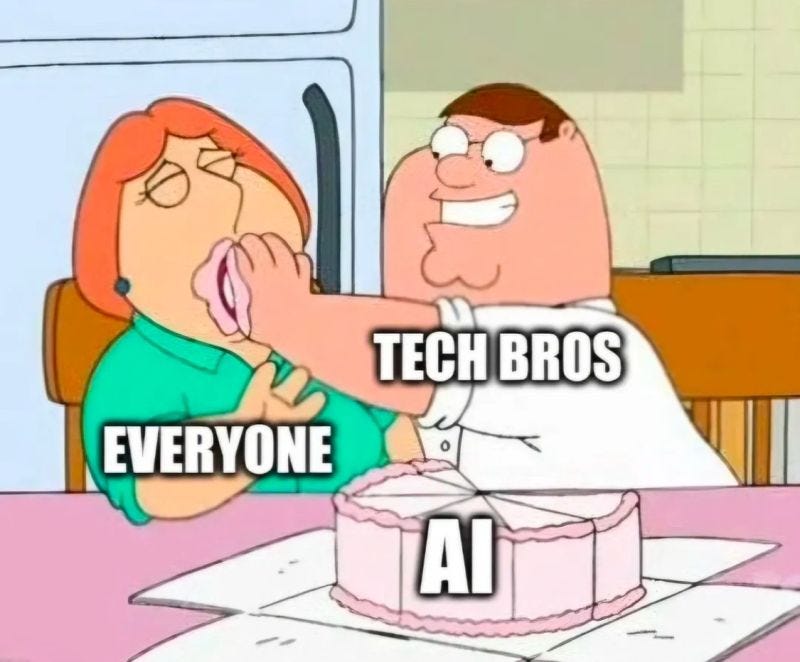

There’s so much tech bro hype around skills that it’s almost impossible to separate the wheat from the chaff.

Skills here. Skills there. The ultimate repo. The skills marketplace.

“I’ve automated my life with these skills” or “I’ve built an entire agency from this set of skills.” You know the vibe.

Few actually understand what skills do - and more importantly, what they don’t.

I spent two weeks going through 200+ skills across dozens of repositories. Installed them. Tested them. Benchmarked them against vanilla Claude Code output.

And I came out the other side with a very different picture than the Twitter hype machine would have you believe.

Most publicly available skills don’t just fail to help. They actively hurt - adding tokens, adding latency, injecting constraints that make the output narrower.

Most are written by people who’ve never done the job they’re trying to automate - I found a “CFO review” skill by a developer who thinks EBITDA is a type of pasta, a “Senior Product Manager” skill that doesn’t mention a single prioritization framework, a “UX Researcher” skill that’s never heard of a screener survey.

But roughly 20% of what I tested - the skills built by people who actually know the domain, who’ve iterated on evaluation, who’ve tested edge cases - can produce output that’s measurably sharper and more consistent than the generic model. I ran the benchmarks. The difference is real.

Everything else I’ve read on skills is either hype or documentation. This is neither.

This is the only guide you’ll need on the topic.

🧩 Skills, explained simply

Skills are prompt injections. That’s it.

They’re markdown files (one SKILL.md per skill, each in its own folder) that sit in your ~/.claude/skills/ directory and get loaded into Claude’s context when triggered. They steer the model’s behavior - its tone, its structure, its priorities, its output format. Nothing more magical than that.
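To make "markdown files in a directory" concrete, here is a minimal sketch of how a skill index could be assembled. It assumes each skill lives in its own folder as a SKILL.md with a small `name:` / `description:` header between `---` fences. The `reschedule-meeting` skill, its wording, and both helper functions are made up for illustration, and this is not how Claude Code itself is implemented.

```python
from pathlib import Path
import tempfile


def read_frontmatter(skill_md: Path) -> dict:
    """Parse simple 'key: value' pairs between the leading '---' fences."""
    lines = skill_md.read_text(encoding="utf-8").splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    meta = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break  # end of the header; the rest is the skill body
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return meta


def list_skills(skills_dir: Path) -> list:
    """Collect the header of every <skill>/SKILL.md under skills_dir."""
    return [read_frontmatter(p) for p in sorted(skills_dir.glob("*/SKILL.md"))]


# Demo against a throwaway directory, not the real ~/.claude/skills/.
demo_root = Path(tempfile.mkdtemp())
skill_dir = demo_root / "reschedule-meeting"  # hypothetical skill name
skill_dir.mkdir()
(skill_dir / "SKILL.md").write_text(
    "---\n"
    "name: reschedule-meeting\n"
    "description: Use when moving a calendar event to a new time.\n"
    "---\n"
    "Preserve every participant, the agenda, the conferencing link, and the duration.\n",
    encoding="utf-8",
)

skills = list_skills(demo_root)
print(skills)
```

The point of the sketch: the short description is the only part that is always visible, and the body is just text that lands in the context window once the skill is picked.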

Claude Code without skills is a brilliant generalist with no memory of your preferences. That new hire who’s terrifyingly smart but asks you how you like your reports formatted every single Monday.

Do skills actually improve your agent’s output?

My OpenClaw assistant manages my calendar. When I ask him to reschedule a meeting - without a skill - he completely butchers it. He’ll pick a random duration. Lose half the participants. Forget the agenda. Drop the Google Meet link. And sometimes - my personal favorite - he’ll delete the original meeting entirely.

For a human PA, rescheduling is thoughtless muscle memory. For the most advanced LLMs on the planet, it’s apparently a Herculean challenge.

The meeting was technically rescheduled. The agent marks the task as complete. Mission accomplished. Champagne all around.

Except your 3pm with the VP of Engineering is now a 45-minute phantom meeting with no attendees, no link, and no agenda. Good luck explaining that one.

Skills let you define the success criteria the model doesn’t know to care about. Not just “reschedule the meeting” but “reschedule the meeting while preserving every participant, the original agenda, the conferencing link, and the correct duration.”
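Those success criteria can be written down as a check. The sketch below is illustrative only: the `Meeting` type, its fields, and `reschedule_violations` are hypothetical names, not part of any calendar API, and a real skill would express the same rules in prose.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Meeting:
    start: datetime
    duration: timedelta
    participants: frozenset
    agenda: str
    meet_link: str


def reschedule_violations(before: Meeting, after: Meeting) -> list:
    """Return every way the rescheduled meeting broke the original."""
    problems = []
    if after.start == before.start:
        problems.append("start time did not change")
    if after.duration != before.duration:
        problems.append("duration changed")
    missing = before.participants - after.participants
    if missing:
        problems.append(f"lost participants: {sorted(missing)}")
    if after.agenda != before.agenda:
        problems.append("agenda changed")
    if after.meet_link != before.meet_link:
        problems.append("conferencing link changed")
    return problems


# Made-up example data.
original = Meeting(
    start=datetime(2025, 1, 6, 15, 0),
    duration=timedelta(minutes=30),
    participants=frozenset({"me@example.com", "vp-eng@example.com"}),
    agenda="Q1 roadmap",
    meet_link="https://meet.example.com/abc",
)

# The "phantom meeting": moved, but everything else is gone.
botched = Meeting(
    start=datetime(2025, 1, 7, 15, 0),
    duration=timedelta(minutes=45),
    participants=frozenset(),
    agenda="",
    meet_link="",
)

# A correct reschedule changes only the start time.
good = replace(original, start=datetime(2025, 1, 7, 15, 0))

problems = reschedule_violations(original, botched)
print(problems)
print(reschedule_violations(original, good))
```

"Rescheduled" passes the agent's own definition of done in both cases. Only the second meeting passes yours.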

It gets sharper with higher-stakes tasks.

I built a “business model reviewer” skill that forces the model to drill into unit economics before anything else. Without the skill, Claude Code gave me a balanced, polite, utterly useless overview. With the skill, it caught that a $39/month SaaS couldn’t cover its own compute costs per user. That’s the difference.

⚠️ The problems nobody talks about

Skills are not deterministic

This is the big one. You can write the perfect skill, install it correctly, name it beautifully - and Claude Code might just... not use it.

Skills sit in a list that Claude sees in its system prompt. When you type a request, the model decides whether to consult a skill based on pattern-matching your words against the skill’s description. It’s probabilistic, not deterministic. There is no guarantee your skill fires.

I tested this. I wrote a CPO review skill with a carefully crafted description. Then I ran 20 different prompts that should obviously trigger it - “do a CPO review,” “give me a go/no-go assessment,” “poke holes in this product strategy.” The trigger rate? Zero. Out of 20 queries, the skill fired exactly zero times.
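If you want to run this kind of test yourself, the bookkeeping is simple. The sketch below assumes you have some way to tell whether the skill fired for a given prompt (reading the session transcript, for example). That detector is passed in as a function, and the `fired_stub` used here is a placeholder that only recognizes a hypothetical `/cpo-review` slash command. It does not talk to Claude Code and does not reproduce my numbers.

```python
from typing import Callable


def trigger_rate(prompts: list, skill_fired: Callable[[str], bool]) -> float:
    """Fraction of prompts for which the skill was actually used."""
    if not prompts:
        raise ValueError("need at least one prompt")
    hits = sum(1 for prompt in prompts if skill_fired(prompt))
    return hits / len(prompts)


prompts = [
    "do a CPO review",
    "give me a go/no-go assessment",
    "poke holes in this product strategy",
    "/cpo-review",  # hypothetical explicit invocation
]


def fired_stub(prompt: str) -> bool:
    """Placeholder detector: pretends only explicit invocation triggers the skill."""
    return prompt.startswith("/cpo-review")


rate = trigger_rate(prompts, fired_stub)
print(f"trigger rate: {rate:.0%}")
```

Swap the stub for a real detector and rerun the same prompt list after every description change, so you are comparing like with like.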

The workaround exists - you can invoke a skill directly with /skill-name - but that defeats the entire purpose of having an intelligent agent that knows when to use its tools.

Even with aggressive description optimization, the fundamental architecture issue remains: the model, not you, decides whether a skill gets loaded. Pretending otherwise is dishonest.

Evaluation is almost non-existent

How do you actually know if a skill is better than no skill? This question should be the first thing anyone asks before installing one. Almost nobody asks it.

Until recently, there was no built-in way to benchmark a skill against baseline Claude Code output. You’d install a skill, use it for a week, and have a vague feeling that it might be better. Or worse. Hard to say. Vibes-based evaluation.
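The alternative to vibes is a paired comparison: same tasks, once without the skill and once with it, scored by the same rubric. Here is a minimal sketch under heavy assumptions. `baseline`, `with_skill`, and `score` are stand-ins you would replace with real model runs and a real grader; the toy versions below just return fixed strings and count keywords.

```python
from typing import Callable


def compare(
    tasks: list,
    baseline: Callable[[str], str],
    with_skill: Callable[[str], str],
    score: Callable[[str, str], float],
) -> dict:
    """Run each task both ways and tally which variant scored higher."""
    tally = {"wins": 0, "losses": 0, "ties": 0}
    for task in tasks:
        base_score = score(task, baseline(task))
        skill_score = score(task, with_skill(task))
        if skill_score > base_score:
            tally["wins"] += 1
        elif skill_score < base_score:
            tally["losses"] += 1
        else:
            tally["ties"] += 1
    return tally


# Toy stand-ins: fixed outputs instead of real model calls.
def toy_baseline(task: str) -> str:
    return "moved meeting"


def toy_with_skill(task: str) -> str:
    return "moved meeting; kept participants, agenda, link"


def toy_score(task: str, output: str) -> float:
    """Count how many required items the output mentions."""
    required = ("participants", "agenda", "link")
    return float(sum(term in output for term in required))


result = compare(
    ["reschedule standup", "reschedule 1:1"],
    toy_baseline,
    toy_with_skill,
    toy_score,
)
print(result)
```

A skill earns its place only if the wins clearly outnumber the losses on tasks you actually care about. Ties still cost you tokens.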

Why not just stick with a well-written system prompt in your CLAUDE.md? It’s simpler, always loads, doesn’t have trigger reliability issues, and is easier to iterate on. Skills win on modularity - you can swap them in and out without touching your core prompt - but anyone telling you they’re a slam-dunk upgrade over a good system prompt is selling something.

🔧 An effective Skill workflow

The latest Claude Code release shipped something that tackles trigger reliability and evaluation in one tool.