The Ultimate Guide to Hermes Agent

A Deep Dive into Hermes Agent: Key Capabilities, Shortcomings, and Head-to-Head Testing vs. OpenClaw.

📢 A quick shoutout to today’s sponsor: Claude Academy

💻 Many PMs ask me: “Mikhail, how do I get up to speed with Claude Code in a limited time?”

Whenever I need to ramp up my own skills, I go to Claude Academy.

It’s the best resource I’ve found for hands-on learning.

💸 Grab 20% off your first month by using the promo code MIKHAIL20 at checkout. Start building here.

Hermes agent just blew up in agentic communities.

141k stars on GitHub in just a few days.

I immediately asked myself - is it even worth the hype?

What’s the value add we can get with it over OpenClaw, for instance.

It seems like all the agentic scaffolding goes through the same hype cycles - a repo with a new feature comes up, it spikes massive attention, then after the majority of people try and fail the interest fades.

This was the case with OpenClaw when token consumption bills started coming up, costing a wild 5k for a task that was never complete.

On the flipside, it seems like the scaffolding itself is becoming more and more robust. A gap identified in one framework becomes a problem to solve for another. So it goes - from Devin to Letta, Julep and finally OpenClaw and now Hermes.

You can call Claude code a scaffolding as well and it does its job (coding primarily) brilliantly.

There’s even a bold claim that bundling OpenClaw with Hermes and Paperclip can give you true autonomous AI-team capability.

Let’s test the waters and see whether this thesis really stands.

Here’s the full and the only step-by-step guide you’ll need on the Hermes agent. You’ll learn everything - from the quirks of the architecture to implementation and pairing it with OpenClaw capabilities.

Let’s go.

🤖 What Hermes Agent Actually Is?

Hermes Agent is an open-source autonomous AI agent built by Nous Research.

Runs on your own infrastructure (VPS). Plugs into any model as a brain.

Hit 95,000 GitHub stars in seven weeks after launch. Currently at 141,000.

That growth rate is absurd. For context, Claude Code took months to cross 50k. Devin is closed-source with a waitlist.

OpenClaw peaked at around 70k before the billing backlash cooled interest.

What does it actually do to justify this level of hype?

Hermes is the first agent framework that gets smarter the longer you use it.

Here’s how.

🧠 The Memory

For a few years scaffolding frameworks have been trying to conquer memory. OpenClaw uses BM25 + hybrid vector search and active compaction once the context runs out.

I’ve been using OpenClaw for two months and I’ve got to say - it really falls short on the promise. For instance, vector search finds relevant entries based on probabilities. The more memories you create, the more they leak into each other, causing weird hallucinations.

On top of it, compaction strategies are far from perfect. Agent makes a summary and leaves out many important details - for instance, which tasks are complete, which are left hanging. This caused OpenClaw to stumble into the same problems again and again.

Hermes nails those two exact problems (presumably)

First, instead of fancy vectors, it uses basic vanilla text search (SQLite-based). An exact match works wonders in terms of accuracy (and you need accuracy when you actively search through memories).

Their reasoning: when you’re looking for “how did I deploy that Lambda function with the custom VPC config,” you want exact keyword match. You typed those words before. They’re in a past conversation. Vector similarity returns 50 vaguely related results about AWS networking. Full-text search returns the exact session where you solved it.

Secondly, it doesn’t just “compact”, it requires an agent to make a smart summary that follows a template and pinpoints important details:

Explicit sections:

* “Resolved Questions”,

* “Pending Questions”,

* “Active Task”,

* “Remaining Work”.

Critical prefix prevents the model from re-answering summarized questions.🛠️ The Skills

Outside of memory alone, Hermes remembers how you work.

It saves successful approaches as reusable skills and indexes every past conversation. You can search for “how did I fix that Kubernetes issue last Tuesday?” and it pulls up the exact session.

Hermes proactively creates and revisits the skills that are based on the tasks you asked him to perform.

Nous Research’s benchmarks show agents with 20+ self-created skills complete similar tasks 40% faster than fresh instances. Performed on the same model with the same prompt. The only difference is accumulated experience.

As you know from my previous articles, I’m suspicious about skills. The key reason is that the agent decides when and how to use them.

By itself, the agent might think - well, that’s good enough already, I don’t need to waste tokens on skills. If you force compliance, it might say - “okay, okay”, but then use 10% of the skill instructions to speed up the response.

So you can generate dozens of skills, but with such random usage patterns - its unclear whether you’ll get any upside at all.

🪙 Token Consumption

In a basic API info exchange you send Claude or ChatGPT the entire context every single time you do a request.

The more requests, the bigger the context, the more expensive it becomes for you to run.

Then eventually, you get to the point where Claude consumes a whole book of instructions and previous responses and starts losing accuracy.

Hermes manages this smartly by using a “breakpoint system”.

They never change your initial prompt and agent tool references, but then they don’t feed LLMs the entire huge context - only the last 3 turns.

So LLM takes in your original prompt untouched, summaries of previous turns and the last 3 turns in full swing.

It allows you to balance out the token consumption cost and accuracy.

🎁 Nice-to-haves

Hermes comes with a bunch of additional features out of the box, albeit not revolutionary and over-hyped.

Messenger integrations (Telegram, discord, whatsapp etc) are baked in.

Provider fallbacks (if you run out of budget or LLM API returns 500), but that’s something that you get with the basic OpenClaw as well.

Prompt injections - it does have a library of key patterns and can identify and proactively block any attempt at a malware prompt injection.

Cron chaining - a cool feature to decouple a task into multiple cron-jobs that are chained to each other as a critical path (find this, research this, output this).

🥊 Hermes vs. OpenClaw Testing

I took my three-months OpenClaw agent conversation history, extracted key patterns and ran an offline test of OpenClaw versus the Hermes.

My goal was to test the actual improvement across key “hype” areas - Search, Skills and Memory compaction.

Alongside, I tested error classification and prompt injection capabilities. Simply because it was cheap to do.

🔎 Search

🏆 OpenClaw - winner

I used all my conversations with OpenClaw for the past two months as a search index. Then I extracted top 15 queries that I ask myself on a daily basis.

Each query had “a golden set of keywords” - for instance, EHIF medical insurance must contain (“social tax”, “33%”, “EHIF”, “total cost”, “minimum wage”).

If the LLM response was able to recall all 5 keywords, the result is 100%. This could also be interpreted as the most qualified answer.

Average recall for OpenClaw with its hybrid vectors was 82.4% while Hermes showed an underwhelming result of 67.9% (completely failing 4 queries). The more complex the query, the worse was the recall.

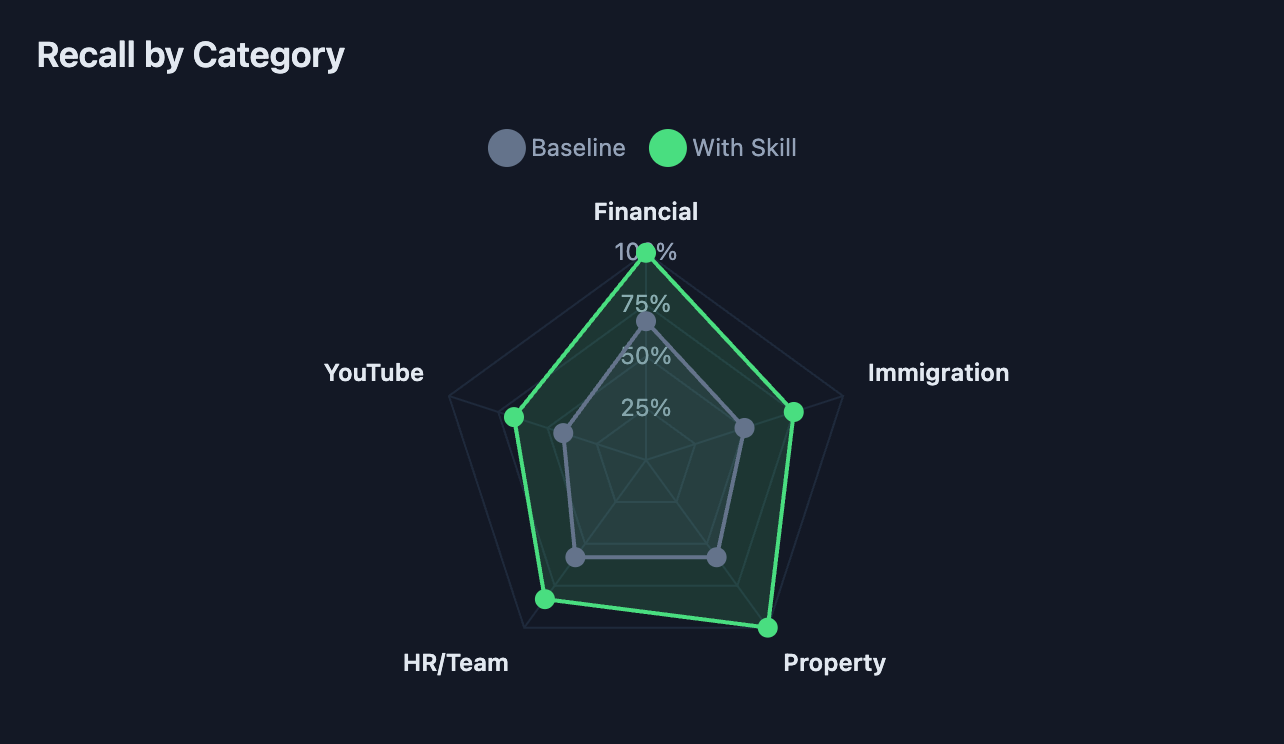

🎯 Skills

🏆 Hermes - winner

So I took 10 different queries across topics that I tinker around the most - financial analysis, team hiring, property investment analysis, immigration and web research.

Same approach to keyword-ing as for search.

On average the skill-based recall has improved by +31% with uplifts ranging from 0% (geopolitics research) to +50% (sardine diet web research).

Recall has improved across all categories - with property analysis showing the most dramatic uplift.

So skills are the real deal. You will get better quality answers.

📦 Memory compaction

🏆 OpenClaw - winner

I simulated four threads using Claude sonnet to test compaction - one with the regular vanilla prompt another one with the structured Hermes request and bookmark logic.

Then I measured the results across two metrics:

% compression

Recall

Recall was measured for each thread against specific keywords (ground truth keys). For instance finance requests had to contain “X money in OÜ bank account”, “20% tax rate”, “quarterly dividends” and “IBKR portfolio”.

So on both threads I’ve seen:

OpenClaw 29.0% compression vs. Hermes 41.5% compression (actually worse compression, because Hermes adds structure overhead to each compaction);

57.8% recall vs. 54.7% recall (Hermes has worse recall).

So ultimately Hermes consumes more tokens and performs worse. My hypothesis is that it adds unnecessary space with the compaction template and rewrites the facts into the Q&A format, killing the original phrasing.

Hard pass.

🔗 Merge Hermes with OpenClaw using Claude Code

I’ve been running OpenClaw for three months already.

There’s a lot of accumulated history. My preferences, tasks, thoughts, logs - even monthly financial burn.

There’s a switching cost if I were to launch a completely new instance - be it Hermes or anything else.

Also the tests have shown that Hermes is particularly good at skills (as they improve recall), but the other capabilities are either on par (prompt injection, integrations) or worse than OpenClaw (e.g. search and compaction).

Since I’m managing a custom-build OpenClaw - i thought, why not extract the best features from Hermes and bake them in my existing agent?

That’s what I did.

Take this github https://github.com/nousresearch/hermes-agent, study the architecture and list all the improvements (extensively) we can make to our existing agent.

Then I took the winning capabilities and asked Claude code to port only them and ignore the rest.

📝 TL;DR

Each scaffolding framework for agents follows the same hype cycle - from increased attention to complete backfire.

OpenClaw genuinely changed the rules of the game, because of how smart the framework was at blending multiple technical concepts together (Soul descriptor, vector search, memory, integrations, heartbeat for proactivity).

All of those concepts addressed inherent weaknesses present in modern-day AI agents - they forget, they make stuff up, they are not proactive.

Hermes doesn’t change the rules of the game. Moreover, some of the tools they offer produce worse output (e.g. search and compaction) than OpenClaw.

However, Hermes is stellar at one specific thing - automatic skill generation. It’s a real killer that adds +31% recall to all agentic queries. I’d even argue this number can go higher with time the more you use and train your agent.

My take: don’t replace your existing OpenClaw instance. Instead, upgrade it with the skill generation capabilities from Hermes and you’ll get the best of both worlds.